Publications

Cheaper free-viewpoint video using 3D skeletons

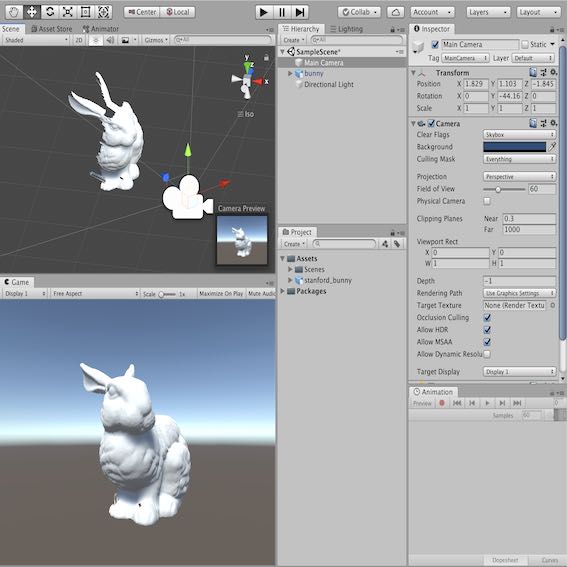

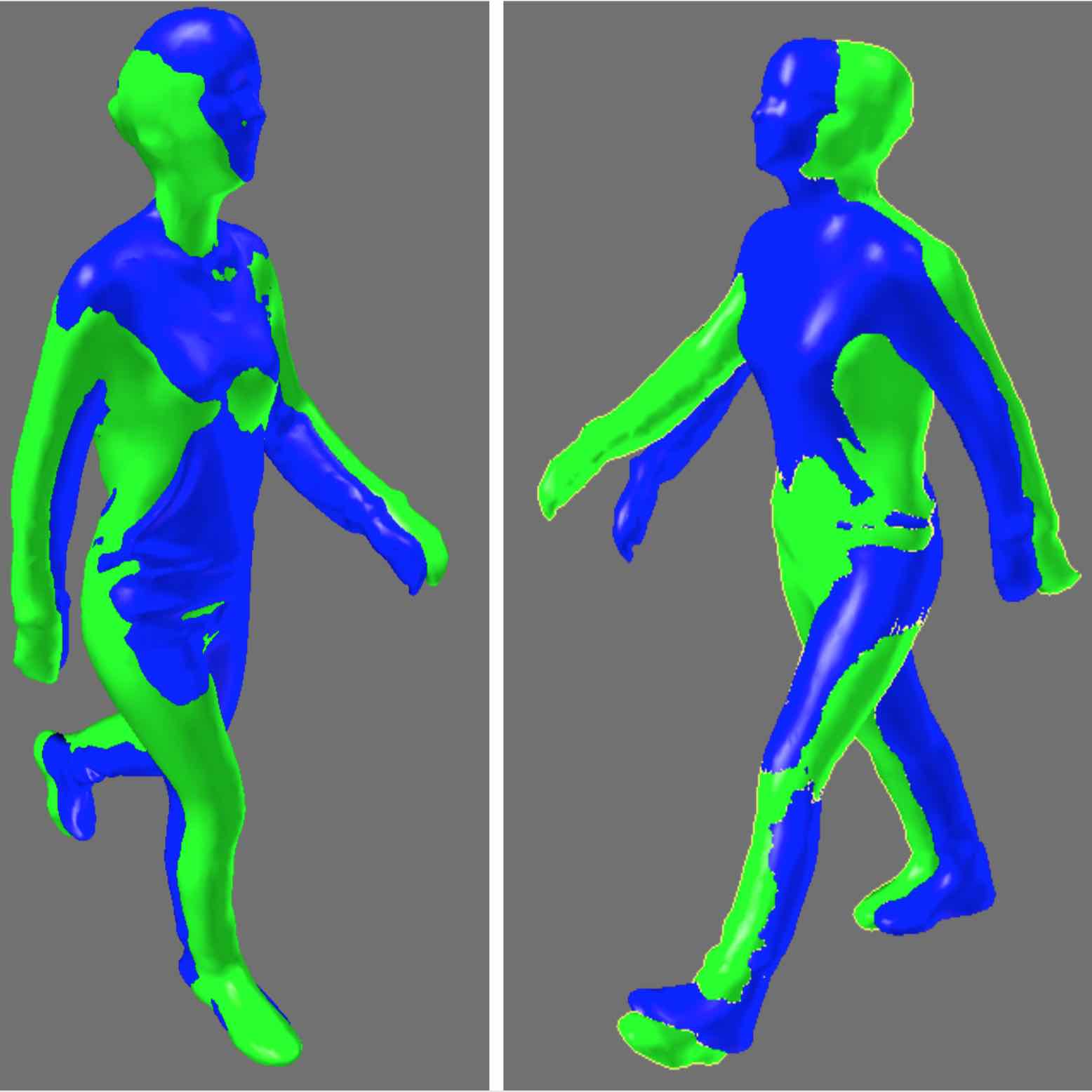

Producing volumetric video can be expensive because of the time it takes to process the 3D meshes. This project, carried out while I was a Researcher in Residence at Digital Catapult, aims to reduce this cost using 3D skeleton tracking. We track skeletons before 3D reconstruction and use them to identify ways to loop and sequence clips of volumetric video, so that fewer frames need to be processed. We hope this technique can also support real-time, on-set decision making, further reducing the cost of re-shoots. I presented a poster on preliminary findings from this project at IEEE VR 2020 (open access).
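To illustrate the general idea of using skeletons to find loop points, here is a minimal sketch. It assumes per-frame 3D joint positions are available before reconstruction and searches for pairs of frames whose poses nearly match, so playback could jump from the later frame back to the earlier one. This is similar in spirit to motion-graph approaches and is not the method used in the project; `find_loop_candidates`, `pose_distance`, `min_gap` and `threshold` are hypothetical names chosen for illustration.

```python
import math
from typing import List, Sequence, Tuple

# Assumed layout (hypothetical): each frame is a sequence of joints, each joint an (x, y, z) tuple.
Joint = Tuple[float, float, float]
Frame = Sequence[Joint]


def pose_distance(a: Frame, b: Frame) -> float:
    """Mean Euclidean distance between corresponding joints of two poses."""
    return sum(math.dist(p, q) for p, q in zip(a, b)) / len(a)


def find_loop_candidates(
    skeletons: Sequence[Frame], min_gap: int = 30, threshold: float = 0.05
) -> List[Tuple[int, int, float]]:
    """Return (start, end, distance) triples where the pose at `end` closely
    matches the pose at `start`, best matches first.

    Illustrative sketch only: `min_gap` stops trivially short loops between
    neighbouring frames, and `threshold` is an assumed tolerance in the same
    units as the joint positions.
    """
    candidates = []
    n = len(skeletons)
    for start in range(n):
        for end in range(start + min_gap, n):
            d = pose_distance(skeletons[start], skeletons[end])
            if d < threshold:
                candidates.append((start, end, d))
    # Sort so the closest pose matches (smoothest potential loops) come first.
    candidates.sort(key=lambda c: c[2])
    return candidates


if __name__ == "__main__":
    # Synthetic capture: a single joint tracing a circle once every 40 frames.
    frames = [
        [(math.cos(2 * math.pi * t / 40), math.sin(2 * math.pi * t / 40), 0.0)]
        for t in range(100)
    ]
    loops = find_loop_candidates(frames, min_gap=30, threshold=1e-6)
    print(len(loops))  # 80: every pair of frames 40 or 80 frames apart
```

Only frames whose skeletons match would then need full 3D reconstruction for a looping clip. A fuller version would likely also compare joint velocities, so that a loop does not join two matching poses that are moving in opposite directions.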

Assessment of volumetric character eye-gaze in HMDs

In volumetric video, eye gaze raises several problems. For example, if a filmed character is looking at the user and the user then moves, it is an open question how best to keep eye lines correct. To address these problems, we first need to understand how accurately users can judge where a volumetric character is directing their gaze. At IEEE VR 2019 I presented the paper Perception of Volumetric Characters' Eye-Gaze Direction in Head-Mounted Displays, written with Prof Anthony Steed, which investigates how accurately people can gauge where a volumetric character is looking.

Transitions in multi-view 360-degree media

At IEEE VR 2018 I presented the TVCG paper The Effect of Transition Type in Multi-View 360-Degree Media, written with Prof Anthony Steed. In this paper, we examined how the type of transition a user sees while navigating a scene captured as multi-view 360-degree media affects their experience. Measures included spatial awareness, preference, and the subjective feeling of moving through the space.

Cinematic virtual reality

At IEEE VR 2017 I presented the paper Cinematic virtual reality: Evaluating the effect of display type on the viewing experience for panoramic video, written with Prof Anthony Steed. We explored several metrics that may indicate the advantages and disadvantages of cinematic virtual reality compared with traditional formats, comparing viewing on three display systems: an HMD, a SurroundVideo+ (pictured), and a standard 16:9 TV. Aspects examined included spatial awareness, narrative engagement and enjoyment.

Object removal in 360° media

As part of my work with panoramic video, I presented a paper investigating object removal in 360° media, written with Prof Anthony Steed, at the Conference on Visual Media Production (CVMP). This work also included helping out on a couple of 360° film shoots for BBC R&D.